I'm currently an AI researcher at Reality Labs, Meta, working on cutting-edge challenges at the intersection of machine learning and computer vision. Before joining Meta, I spent more than eight years in the autonomous driving industry, contributing to research efforts at Cruise and Uber ATG. I earned my PhD from the University of Illinois Urbana-Champaign, where my research focused on computer vision.

Publications

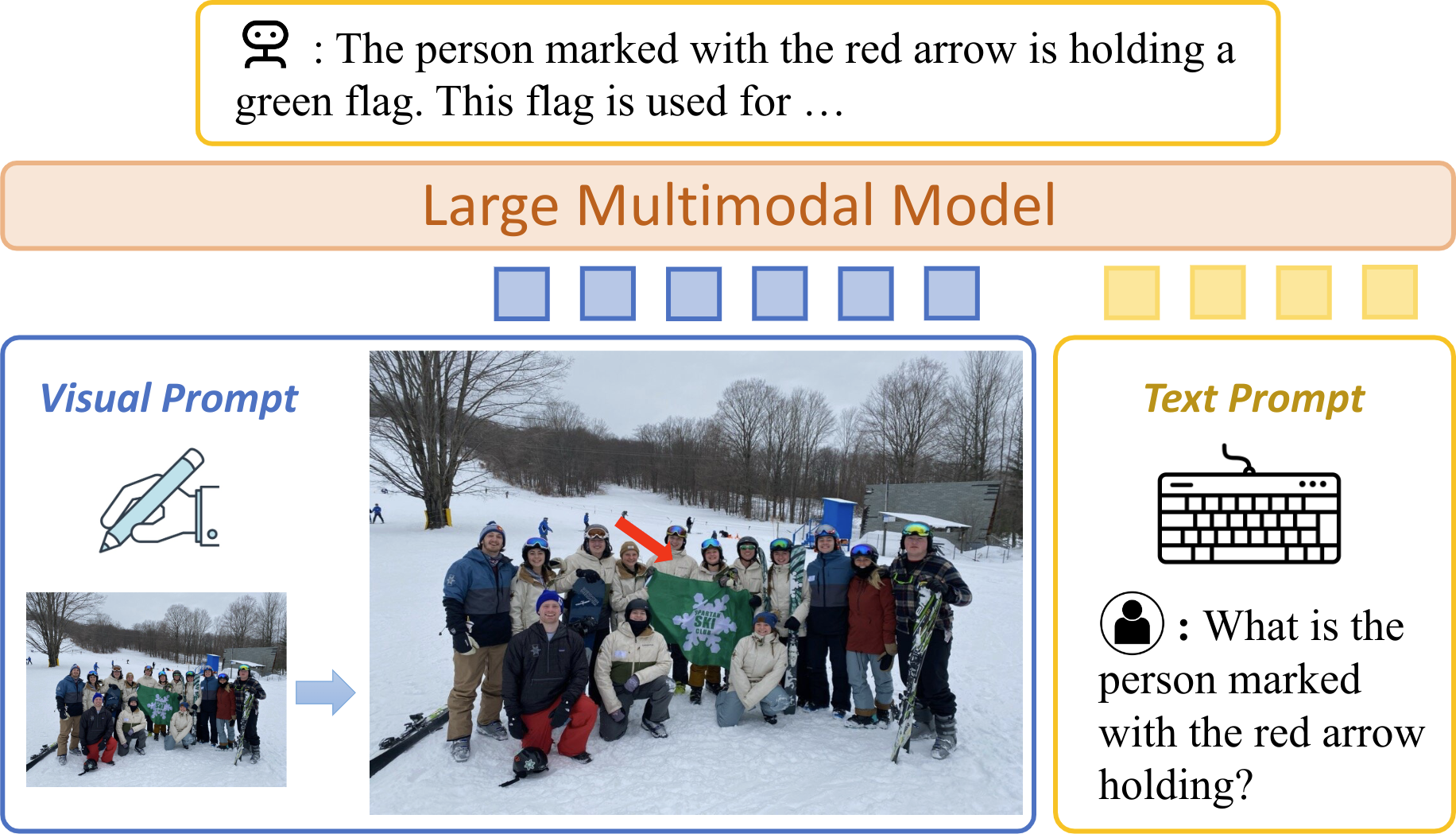

Generative Data Mining with Longtail-Guided Diffusion

D. S. Hayden, M. Ye, T. Garipov, G. P. Meyer, C. Vondrick, Z. Chen, Y. Chai, E. M. Wolff, S. Srinivasa

International Conference on Machine Learning (ICML), 2025

PDF

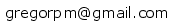

Cohere3D: Exploiting Temporal Coherence for Unsupervised Representation Learning of Vision-based Autonomous Driving

Y. Xie, H. Chen, G. P. Meyer, Y. J. Lee, E. M. Wolff, M. Tomizuka, W. Zhan, Y. Chai, X. Huang

IEEE International Conference on Robotics and Automation (ICRA), 2025

PDF

VLMine: Long-Tail Data Mining with Vision Language Models

M. Ye, G. P. Meyer, Z. Zhang, D. Park, S. K. Mustikovela, Y. Chai, E. M. Wolff

IEEE/CVF Winter Conference on Applications of Computer Vision Workshops (WACVW), 2025

PDF

VLM-AD: End-to-End Autonomous Driving through Vision-Language Model Supervision

Y. Xu, Y. Hu, Z. Zhang, G. P. Meyer, S. K. Mustikovela, S. Srinivasa, E. M. Wolff, X. Huang

arXiv, 2024

PDF

VLM-KD: Knowledge Distillation from VLM for Long-Tail Visual Recognition

Z. Zhang, G. P. Meyer, Z. Lu, A. Shrivastava, A. Ravichandran, E. M. Wolff

arXiv, 2024

PDF

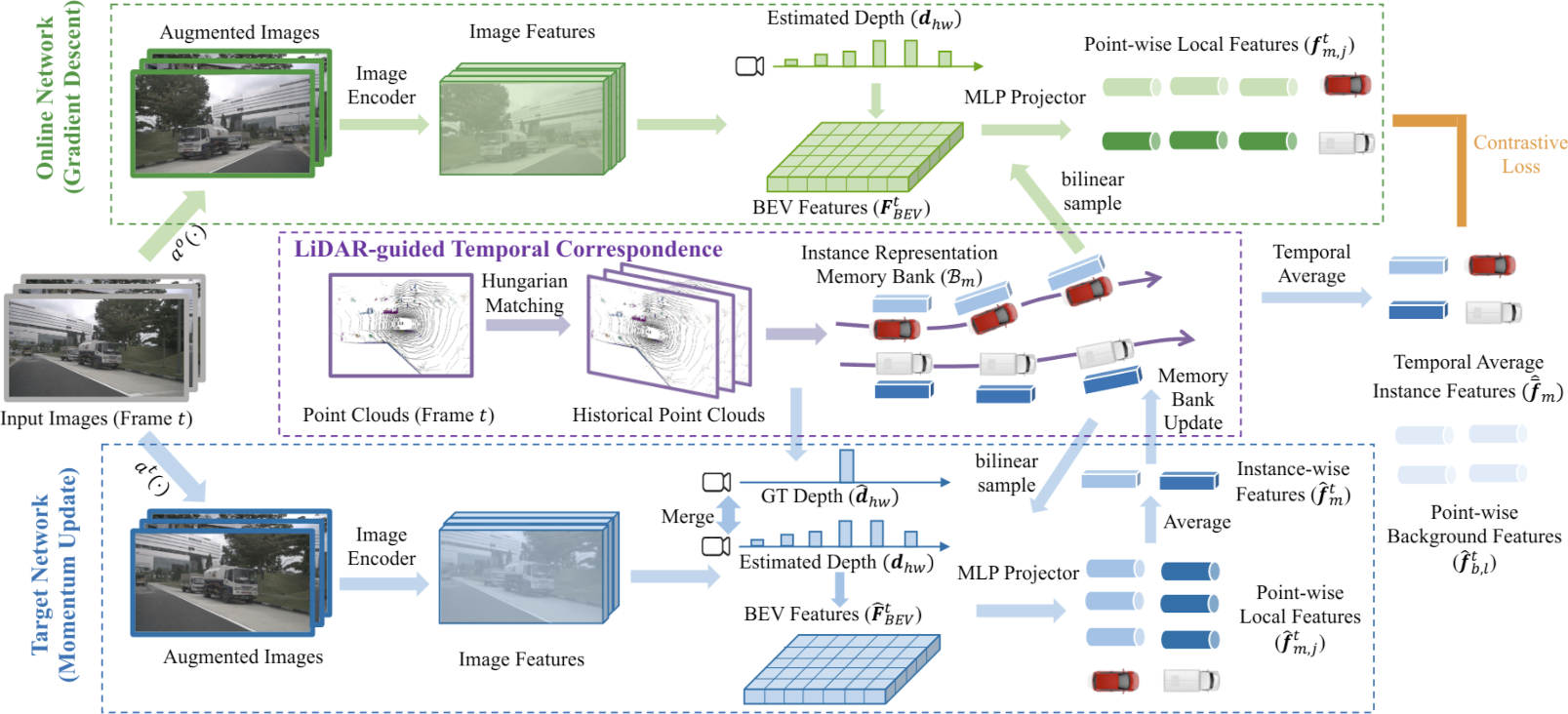

ViP-LLaVA: Making Large Multimodal Models Understand Arbitrary Visual Prompts

M. Cai, H. Liu, D. Park, S. K. Mustikovela, G. P. Meyer, Y. Chai, Y. J. Lee

IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024

PDF Project Page

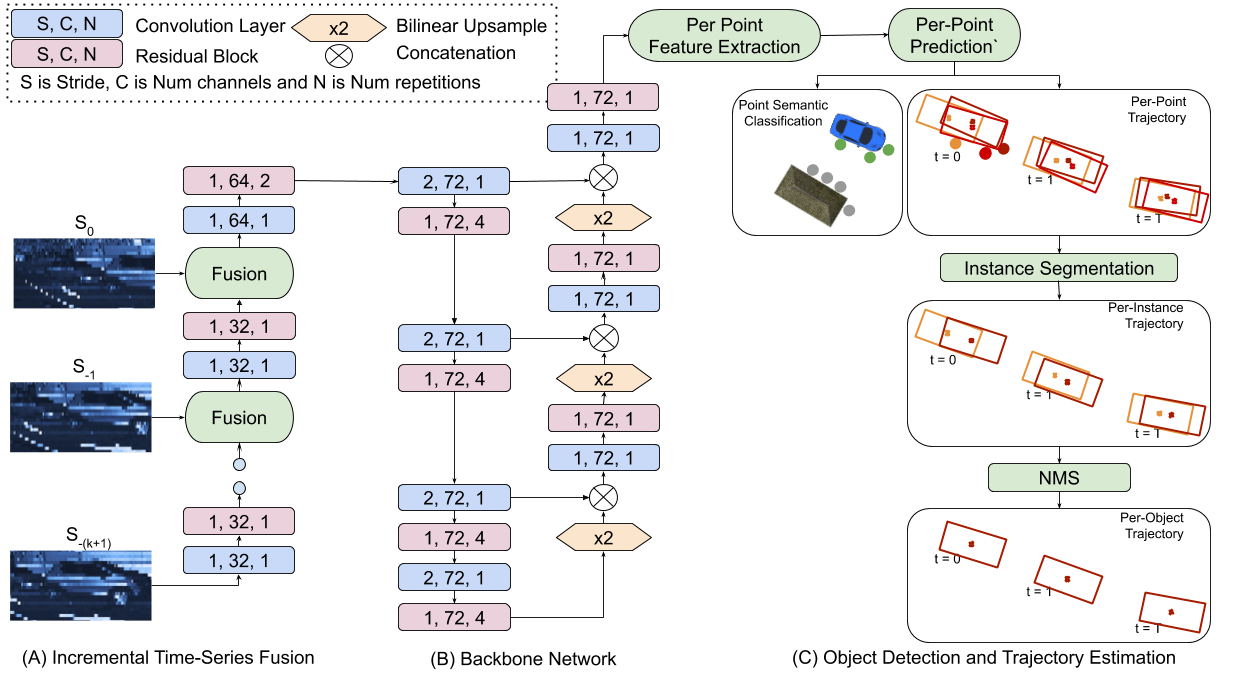

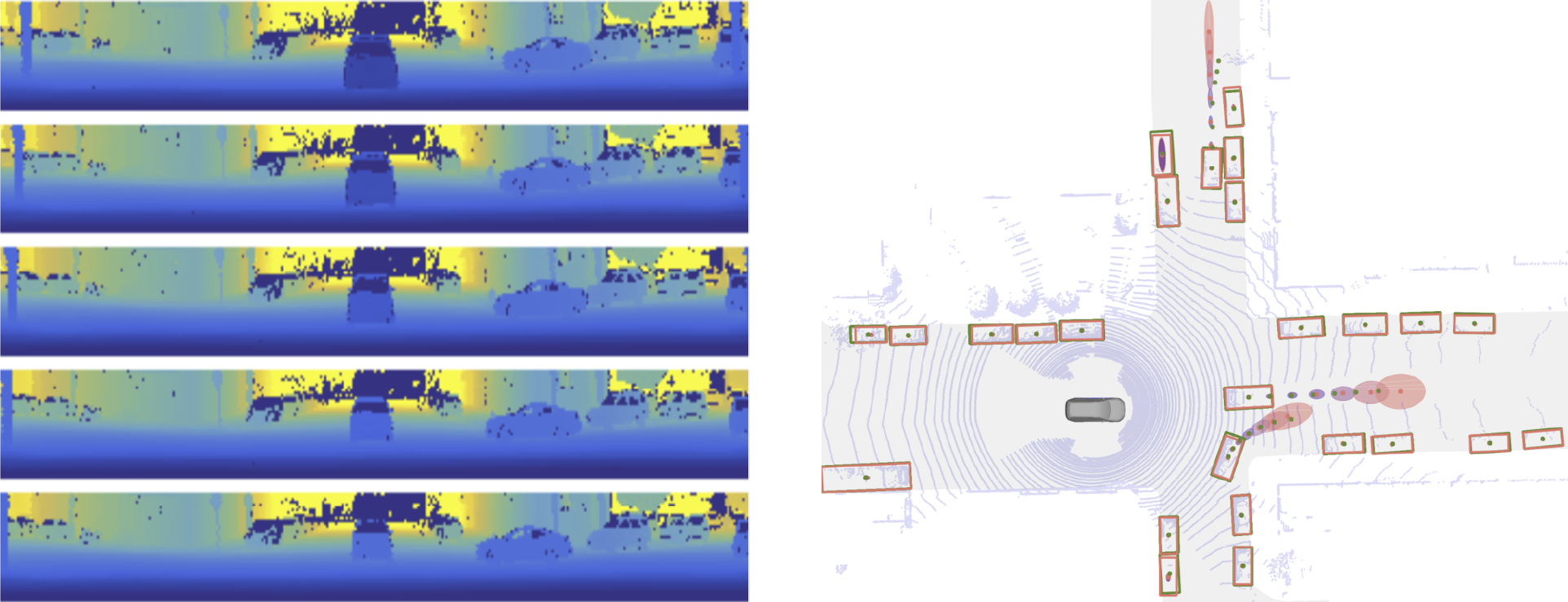

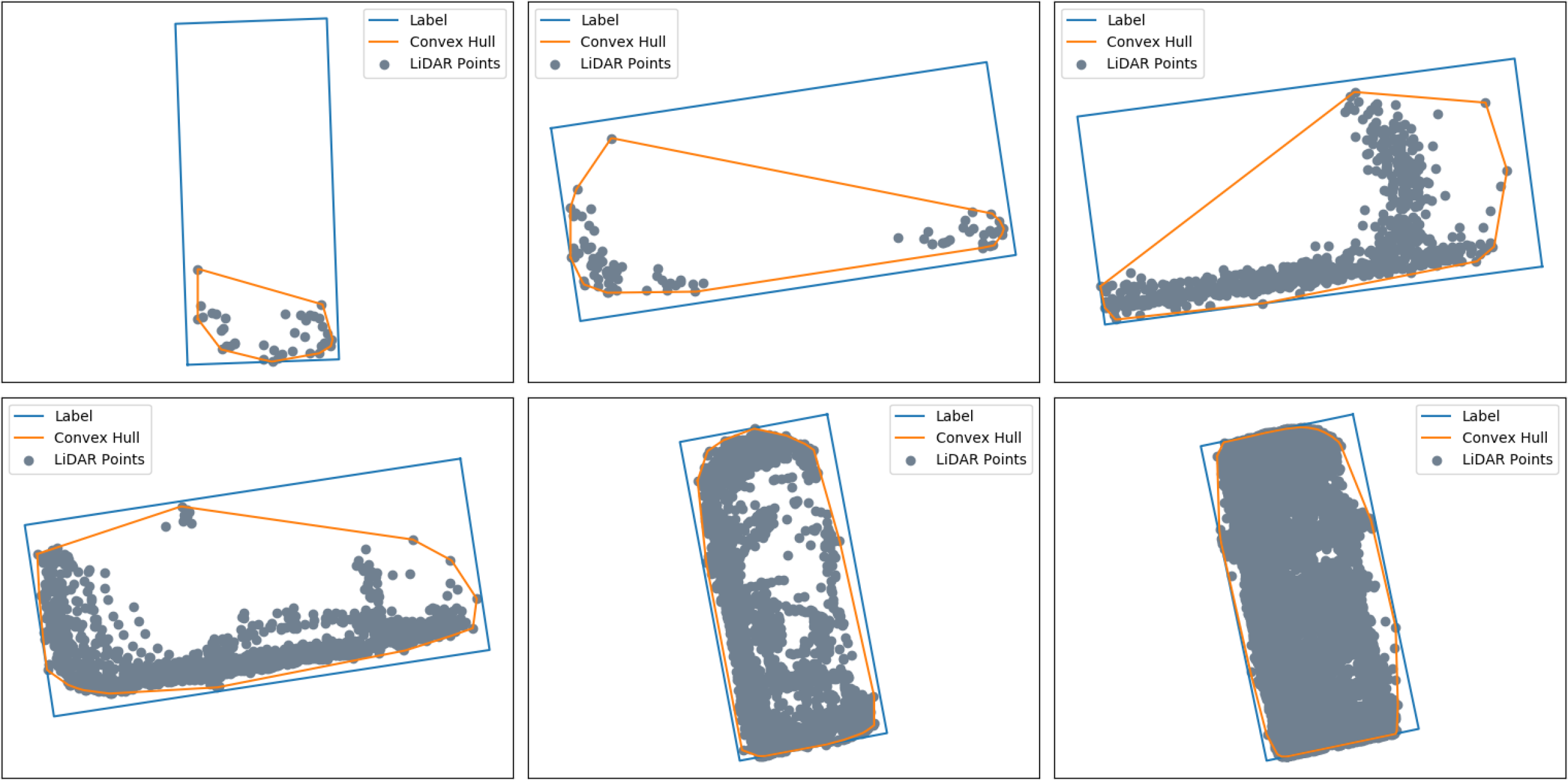

RV-FuseNet: Range View Based Fusion of Time-Series LiDAR Data for Joint 3D Object Detection and Motion Forecasting

A. Laddha, S. Gautam, G. P. Meyer, C. Vallespi-Gonzalez, C. K. Wellington

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2021

PDF

SDVTracker: Real-Time Multi-Sensor Association and Tracking for Self-Driving Vehicles

S. Gautam, G. P. Meyer, C. Vallespi-Gonzalez, B. C. Becker

IEEE/CVF International Conference on Computer Vision Workshops (ICCVW), 2021

PDF

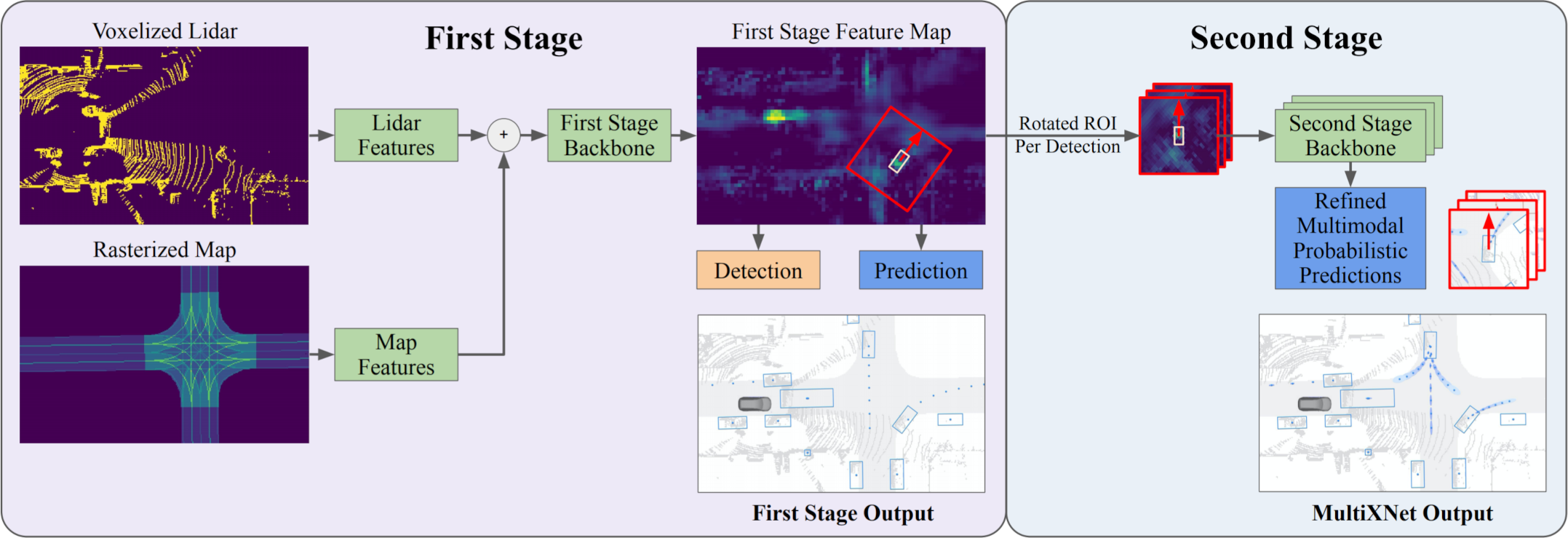

MultiXNet: Multiclass Multistage Multimodal Motion Prediction

N. Djuric, H. Cui, Z. Su, S. Wu, H. Wang, F.-C. Chou, L. San Martin, S. Feng, R. Hu, Y. Xu, A. Dayan, S. Zhang, B. C. Becker, G. P. Meyer, C. Vallespi-Gonzalez, C. K. Wellington

IEEE Intelligent Vehicles Symposium (IV), 2021

PDF

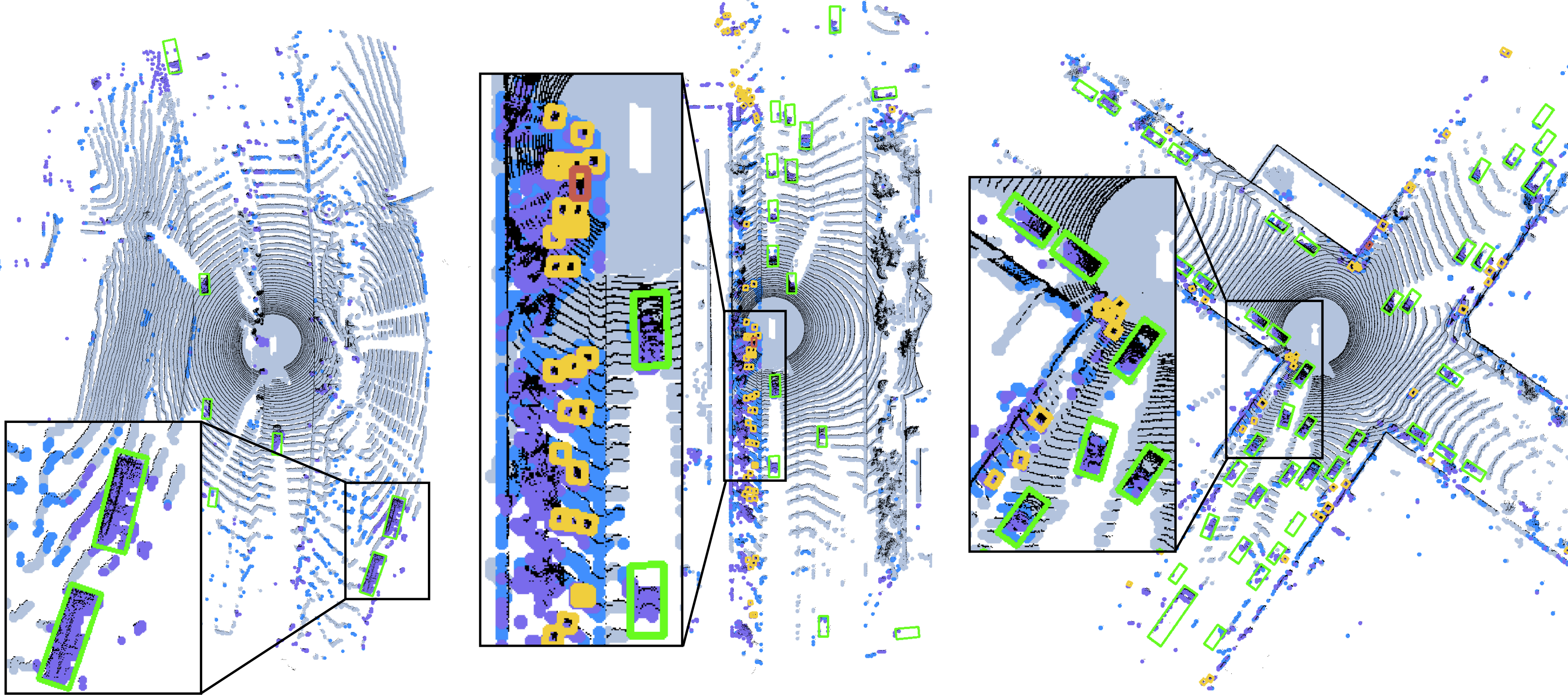

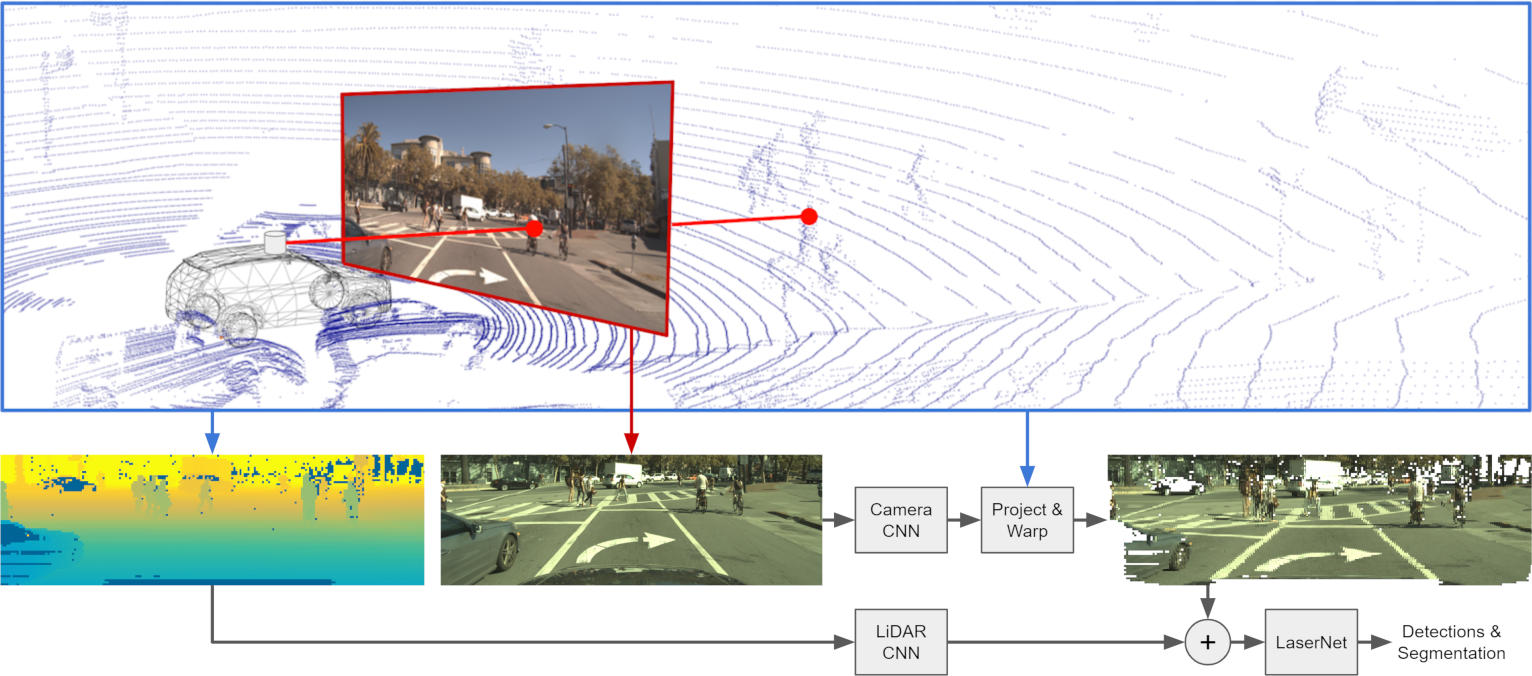

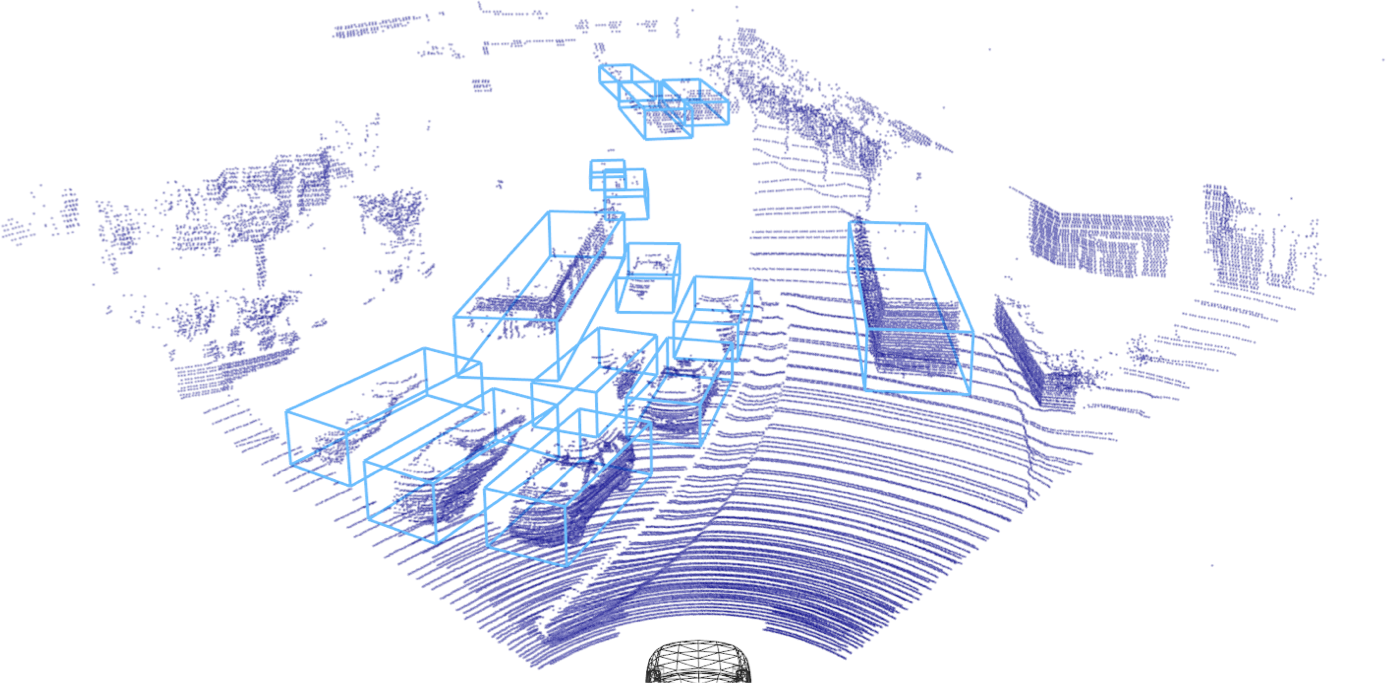

LaserFlow: Efficient and Probabilistic Object Detection and Motion Forecasting

G. P. Meyer, J. Charland, S. Pandey, A. Laddha, S. Gautam, C. Vallespi-Gonzalez, C. K. Wellington

IEEE Robotics and Automation Letters (RA-L), 2020

Best Paper Award

PDF Video

Real-time 3D Face Verification with a Consumer Depth Camera

G. P. Meyer and M. N. Do

Conference on Computer and Robot Vision (CRV), 2018

PDF

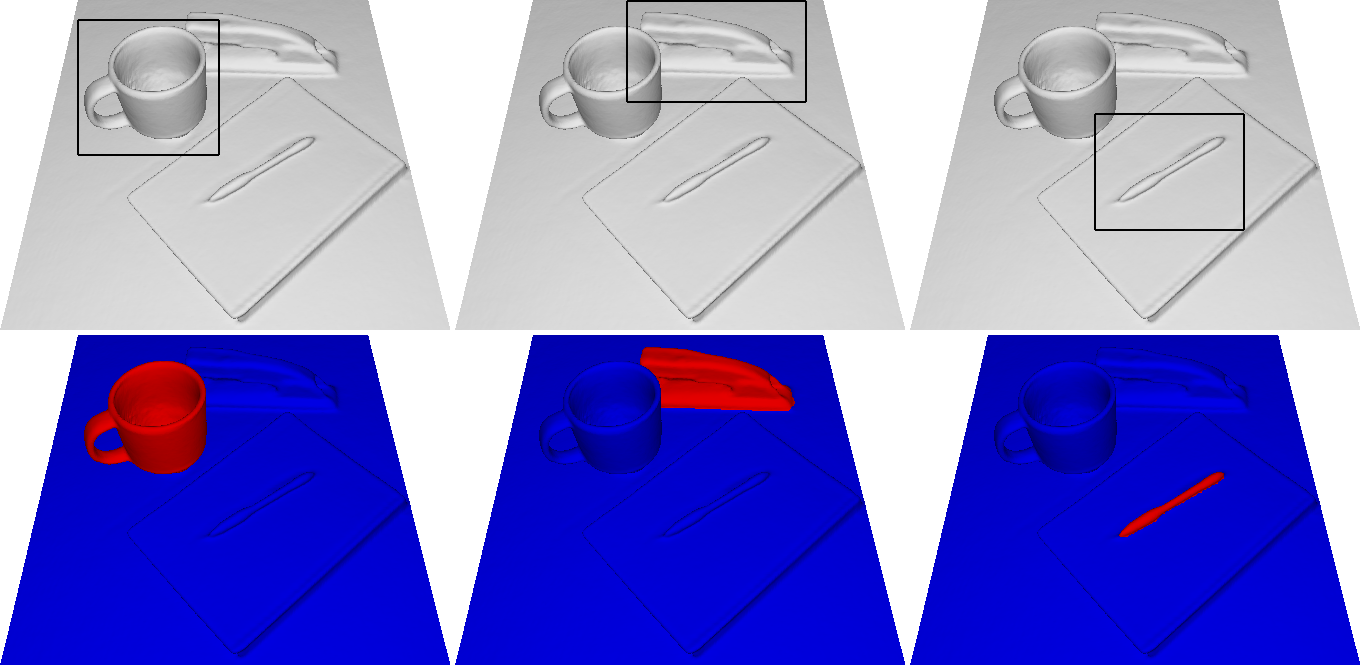

3D GrabCut: Interactive Foreground Extraction for Reconstructed 3D Scenes

G. P. Meyer and M. N. Do

Eurographics Workshop on 3D Object Retrieval (3DOR), 2015

PDF
